HDFS is designed to store large volumes of data, from gigabytes and terabytes up to petabytes. This is accomplished by using a block-structured filesystem. Individual files are split into fixed-size blocks that are stored on machines across the cluster. Files made of several blocks generally do not have all of their blocks stored on a single machine. HDFS ensures reliability by replicating blocks and distributing the replicas across the cluster. The default replication factor is three, meaning that each block exists three times on the cluster. Block-level replication keeps data available even when machines fail.

This chapter begins by introducing the core concepts of HDFS and explains how to interact with the filesystem using the native built-in commands. After a few examples, a Python client library is introduced that enables HDFS to be accessed programmatically from within Python applications.

The architectural design of HDFS is composed of two processes: a process known as the NameNode holds the metadata for the filesystem, and one or more DataNode processes store the blocks that make up the files. The NameNode and DataNode processes can run on a single machine, but HDFS clusters commonly consist of a dedicated server running the NameNode process and possibly thousands of machines running the DataNode process. The NameNode is the most important machine in HDFS, because it holds the metadata for the entire filesystem.
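The block splitting and replication described above can be made concrete with a little arithmetic. The following is a minimal sketch, not how HDFS itself places replicas: it assumes a 128 MiB block size (the text only says "fixed-size"), uses hypothetical DataNode names, and substitutes a simple round-robin placement for the real placement policy. It only illustrates why losing one machine does not lose a block.

```python
BLOCK_SIZE = 128 * 1024 * 1024  # assumption: 128 MiB blocks; the text only says "fixed-size"
REPLICATION = 3                 # default replication factor mentioned in the text


def block_count(file_size, block_size=BLOCK_SIZE):
    """Return how many fixed-size blocks a file of file_size bytes occupies."""
    return -(-file_size // block_size)  # ceiling division on integers


def place_replicas(num_blocks, nodes, replication=REPLICATION):
    """Toy round-robin placement: each block's replicas land on distinct nodes.

    This is an illustration only, not the HDFS placement policy.
    """
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    return {
        block: [nodes[(block + r) % len(nodes)] for r in range(replication)]
        for block in range(num_blocks)
    }


def survives_failure(placement, failed_node):
    """True if every block still has at least one replica off the failed node."""
    return all(
        any(node != failed_node for node in replicas)
        for replicas in placement.values()
    )


if __name__ == "__main__":
    nodes = ["dn1", "dn2", "dn3", "dn4"]  # hypothetical DataNode names
    blocks = block_count(1024 ** 3)       # a 1 GiB file
    placement = place_replicas(blocks, nodes)
    print(blocks, "blocks,", blocks * REPLICATION, "block replicas in total")
    print("block 0 replicas:", placement[0])
    print("survives loss of dn2:", survives_failure(placement, "dn2"))
```

Because every block has replicas on three different machines in this sketch, any single machine can fail and each block remains readable from another node.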

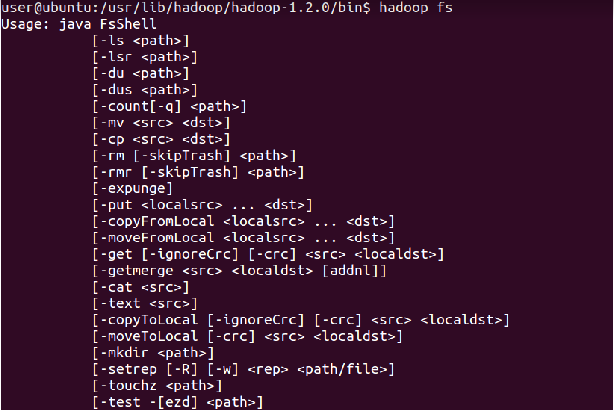

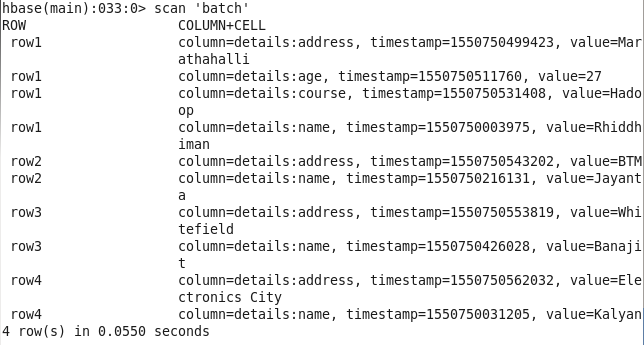

Chapter 1.

The Hadoop Distributed File System (HDFS) is a Java-based distributed, scalable, and portable filesystem designed to span large clusters of commodity servers. The design of HDFS is based on GFS, the Google File System, which is described in a paper published by Google. Like many other distributed filesystems, HDFS holds a large amount of data and provides transparent access to many clients distributed across a network. Where HDFS excels is in its ability to store very large files in a reliable and scalable manner.

1. Check Hadoop version on Ubuntu 18.04 or Find Hadoop Installed Version

The standard command for this is `hadoop version`. Output:

    Hadoop 3.1.1
    Source code repository -r 2b9a8c1d3a2caf1e733d57f346af3ff0d5ba529c
    Compiled by leftnoteasy on T04:26Z
    Compiled with protoc 2.5.0
    From source with checksum f76ac55e5b5ff0382a9f7df36a3ca5a0
    This command was run using /usr/local/hadoop/share/hadoop/common/hadoop-common-3.1.1.jar

The output clearly shows Hadoop version 3.1.1.

2. List Directories in HDFS on Hadoop 3.1.1, Ubuntu 18.04

This Hadoop fs command behaves like -ls, but recursively displays entries in all subdirectories of a path. Here the / directory is listed:

    hdfs dfs -ls -R /

Output:

    drwxr-xr-x - hduser supergroup 0 01:51 /hadoop
    drwxr-xr-x - hduser supergroup 0 23:53 /sample
    drwxr-xr-x - hduser supergroup 0 23:53 /sample/a

You can also list directories using the browser GUI: open the Hadoop web UI, then click Utilities -> Browse the file system.

3. Create directory on Hadoop file system on Hadoop 3.1.1, Ubuntu 18.04

Ex: Create a new directory sample on HDFS, then list the root directory:

    hdfs dfs -mkdir /sample
    hadoop fs -ls /

Output:

    drwxr-xr-x - hduser supergroup 0 01:51 /hadoop
    drwxr-xr-x - hduser supergroup 0 23:46 /sample

The mkdir command above creates the sample directory at the root "/" location.

The put command copies a file or directory from the local filesystem to a destination in HDFS.

Ex: Insert the text file a.txt into the HDFS directory /sample/:

    hdfs dfs -put a.txt /sample/

List the inserted file on HDFS:

    hadoop fs -ls /sample/

Output:

    drwxr-xr-x - hduser supergroup 0 23:53 /sample/a
    -rw-r--r-- 1 hduser supergroup 11 00:55 /sample/a.txt

Read the file on HDFS using cat:

    hdfs dfs -cat /sample/a.
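The shell commands in this walkthrough can also be driven from a Python script. The sketch below is not the Python client library the chapter refers to; it is only a thin wrapper around the `hdfs dfs` command line, and the helper names `hdfs_command`, `run_hdfs`, and `parse_ls_line` are made up for illustration. The parser assumes the usual eight-column `-ls` layout (permissions, replication, owner, group, size, date, time, path); the listings shown in this post omit the date column, so the sample line uses a placeholder date.

```python
import subprocess


def hdfs_command(action, *args):
    """Build the argument list for an `hdfs dfs` subcommand such as ls or put."""
    return ["hdfs", "dfs", f"-{action}", *args]


def run_hdfs(action, *args):
    """Run the command and return its standard output.

    Requires the hdfs CLI on PATH; raises CalledProcessError on failure.
    """
    result = subprocess.run(
        hdfs_command(action, *args),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


def parse_ls_line(line):
    """Split one `-ls` output line into named fields.

    Assumes eight whitespace-separated columns; returns None for lines
    that do not match, such as a "Found N items" header.
    """
    parts = line.split(None, 7)
    if len(parts) != 8:
        return None
    perms, replication, owner, group, size, date, time, path = parts
    return {
        "is_dir": perms.startswith("d"),
        "permissions": perms,
        # Directories show "-" in the replication column.
        "replication": None if replication == "-" else int(replication),
        "owner": owner,
        "group": group,
        "size": int(size),
        "modified": f"{date} {time}",
        "path": path,
    }


if __name__ == "__main__":
    # Illustrative line only; the date is a placeholder, not real output.
    sample = "-rw-r--r--   1 hduser supergroup         11 2018-01-01 00:55 /sample/a.txt"
    print(hdfs_command("ls", "-R", "/"))
    print(parse_ls_line(sample))
```

On a machine with Hadoop installed, `run_hdfs("mkdir", "/sample")` followed by `run_hdfs("put", "a.txt", "/sample/")` would mirror the mkdir and put steps, and each line of `run_hdfs("ls", "/sample/")` could be passed to `parse_ls_line`.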
